Just add noise: improving AI decision-making

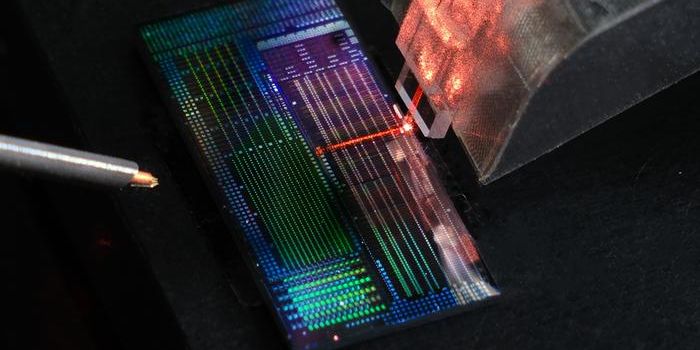

New work on artificial intelligence from a collaboration between the University of Texas at San Antonio (UTSA), the University of Central Florida (UCF), the Air Force Research Laboratory (AFRL) and SRI International improves the way that AI learns. The method alters the way that machine learning decisions are made by adding noise (also called pixelation) into multiple layers of a neural network.

"It's about injecting noise into every layer," said lead researcher Sumit Jha, a professor in the Department of Computer Science at UTSA. "The network is now forced to learn a more robust representation of the input in all of its internal layers. If every layer experiences more perturbations in every training, then the image representation will be more robust and you won't see the AI fail just because you change a few pixels of the input image."

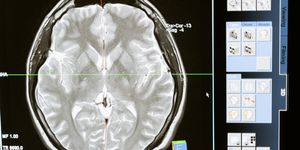

This work builds on Jha’s team’s past investigations into AI safety, research which they presented in 2019 at the AI Safety workshop and the International Joint Conference on Artificial Intelligence (IJCAI). They say that developing "explainable AI," which refers to artificial intelligence that has a high level of assurance, is imperative if this form of technology is to be trusted for applications such as medical imaging and autonomous driving.

The development from this team involves the use of stochastic differential equations (SDEs) over neural ordinary differential equations (ODEs) within a network. While ODEs train a machine by passing one input through one network, where it moves through the layers to create one response in the output layer, SDEs learn from a set of images as a result of the injection of noise in multiple layers of the neural network.
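To make the contrast concrete, the difference can be sketched in a few lines of Python. This is only an illustrative toy, not the team's implementation: the `drift` function, the `weights` values, the step size `dt` and the noise scale `sigma` are all assumptions chosen for the example. It treats each layer as one integration step, so that setting `sigma` to zero gives a deterministic ODE-style pass, while a positive `sigma` injects fresh Gaussian noise at every layer (an Euler-Maruyama step).

```python
import math
import random


def drift(h, weights):
    """Hypothetical layer function f(h): tanh of a weighted sum per unit."""
    return [math.tanh(sum(w * x for w, x in zip(row, h))) for row in weights]


def forward(h0, weights, steps=10, dt=0.1, sigma=0.0, rng=None):
    """Integrate dh = f(h) dt + sigma dW, one step per 'layer'.

    sigma = 0 is the plain Euler discretization of a neural ODE;
    sigma > 0 adds Gaussian noise at every step (Euler-Maruyama).
    """
    rng = rng or random.Random()
    h = list(h0)
    for _ in range(steps):
        f = drift(h, weights)
        h = [
            x + fx * dt + sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)
            for x, fx in zip(h, f)
        ]
    return h


weights = [[0.5, -0.3], [0.2, 0.8]]  # illustrative values, not trained
x = [1.0, -1.0]                      # stand-in for an input image

# ODE-style pass: the same input always yields the same output.
ode_out_a = forward(x, weights, sigma=0.0)
ode_out_b = forward(x, weights, sigma=0.0)

# SDE-style pass: each run perturbs every layer differently.
sde_out_a = forward(x, weights, sigma=0.1, rng=random.Random(1))
sde_out_b = forward(x, weights, sigma=0.1, rng=random.Random(2))
```

In this sketch the two ODE-style outputs are identical, while the two SDE-style outputs differ from run to run. A network trained under the noisy passes has to cope with perturbations at every layer, which is the intuition behind the robustness Jha describes.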

The team plans to present this advancement, which they describe in the paper "On Smoother Attributions using Neural Stochastic Differential Equations," at the next IJCAI.

"I am delighted to share the fantastic news that our paper on explainable AI has just been accepted at IJCAI," Jha exclaims. "This is a big opportunity for UTSA to be part of the global conversation on how a machine sees."

Sources: UTSA, EurekAlert