Brain Mapping The Physicists' Way (Part I)

The human brain is often considered the holy grail of medical research, but a growing number of theoretical physicists want to study it a little differently. Speaking to a packed audience in CERN's theory department, Vijay Balasubramanian, a biophysicist and neuroscientist from the University of Pennsylvania, described his unique angle for probing one of the most studied yet least understood organs.

Balasubramanian is a theoretical particle physicist by training. He also worked on the UA1 collaboration at CERN's Super Proton Synchrotron, in which the W and Z bosons were discovered. Today his research ranges from string theory to theoretical biophysics, where he applies methodologies common in physics to model the neural topography of information processing in the brain.

In his presentation, Balasubramanian pointed out that the brain makes up just 2% of our body weight but accounts for 20% of our metabolic load. The brain runs on just 12 watts, roughly one-seventh the power of a typical laptop computer, yet it delivers far more computational power and performs much subtler functions.

To put things in perspective, consider how much energy an AI-based system consumes. At this year's CES, Nvidia, the graphics and AI technology giant, showcased a new processor called Xavier. With an eight-core CPU, a 512-core GPU, a deep learning accelerator, computer vision accelerators, and 8K video processors, Xavier can power level 2 or level 3 self-driving vehicles. But it consumes 30 watts, more than twice as much as a human brain. For a more advanced (level 4) autonomous car, Nvidia estimated that a multi-processor system consuming up to 500 watts would be required. Ensembles, or circuits, of neurons, which are more than just a biological concept of tissue, are what allow the human brain to deliver high performance with minimal energy.
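The power comparisons above reduce to simple ratios. The minimal Python sketch below uses only the figures quoted in this article (12 W for the brain, 30 W for Xavier, up to 500 W for a level 4 system, 2% of body weight and 20% of metabolic load). The laptop figure is not stated directly; it is an assumption derived here from the "one-seventh" comparison.

```python
# Power figures quoted in the article, in watts.
BRAIN_W = 12.0
XAVIER_W = 30.0
LEVEL4_SYSTEM_W = 500.0  # upper estimate quoted for a level 4 system

# Assumption: the laptop figure is implied by the "one-seventh" ratio, not stated directly.
LAPTOP_W = 7 * BRAIN_W

# Brain's share of body weight and of metabolic load, in percent.
BRAIN_MASS_PCT = 2
BRAIN_METABOLIC_PCT = 20


def ratio_to_brain(watts: float) -> float:
    """How many times more power a system draws than the brain."""
    return watts / BRAIN_W


def metabolic_intensity() -> float:
    """Brain's metabolic share divided by its mass share (average tissue = 1)."""
    return BRAIN_METABOLIC_PCT / BRAIN_MASS_PCT


if __name__ == "__main__":
    systems = [
        ("laptop (implied)", LAPTOP_W),
        ("Xavier", XAVIER_W),
        ("level 4 system", LEVEL4_SYSTEM_W),
    ]
    for name, watts in systems:
        print(f"{name}: {watts:.0f} W, {ratio_to_brain(watts):.1f}x the brain")
    print(f"brain tissue is {metabolic_intensity():.0f}x as metabolically demanding as average")
```

By these numbers, Xavier draws 2.5 times the brain's power, a 500-watt level 4 system draws about 42 times as much, and each gram of brain tissue uses roughly ten times the body's average share of energy.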

Compared with the brain's structure, which is reasonably well understood, the cooperative action of many specialized neurons and the circuits they form through interaction is still largely unclear. Balasubramanian's mathematical models are turning out to describe these circuits rather well. His calculations agree with observation: the brains of humans and of animals at the top of the evolutionary ladder achieve the greatest cognitive capability with the fewest possible neurons, which explains their high energy efficiency.

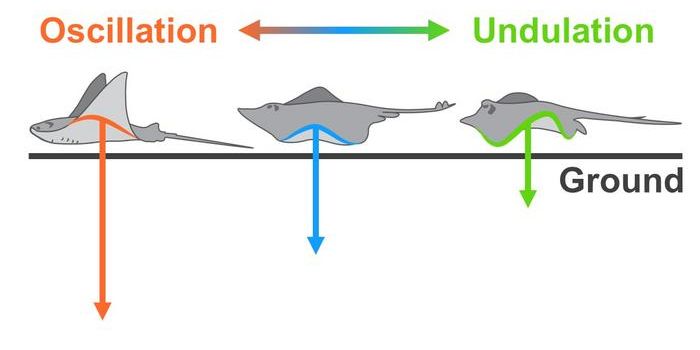

On a similar note, in a paper published in the journal Frontiers in Neural Circuits last October, researchers described a model of the neural network within neocortical columns and a theory of how the brain learns models of objects through movement. They propose that the brain calculates an "allocentric" location (a location relative to the object being sensed) for the features of any given object.
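The idea of an allocentric location can be illustrated geometrically: a point touched by a sensor is re-expressed in the object's own coordinate frame, so the same spot on the object gets the same location no matter where the object sits or how it is turned. The Python sketch below is only a 2D geometric illustration under that assumption; the paper describes a neural mechanism, not this coordinate transform, and the function name `to_allocentric` is hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def to_allocentric(sensor_xy: Point, object_origin_xy: Point, object_angle_rad: float) -> Point:
    """Express a sensed point in the object's own frame (hypothetical 2D illustration).

    sensor_xy and object_origin_xy are given in a shared, body-centred frame;
    object_angle_rad is the object's rotation in that frame.
    """
    # Shift so the object's origin becomes (0, 0).
    dx = sensor_xy[0] - object_origin_xy[0]
    dy = sensor_xy[1] - object_origin_xy[1]
    # Undo the object's rotation so the result does not depend on its pose.
    c, s = math.cos(-object_angle_rad), math.sin(-object_angle_rad)
    return (c * dx - s * dy, s * dx + c * dy)


if __name__ == "__main__":
    # The same spot on an upright object and on one rotated by 90 degrees
    # maps to (approximately) the same object-relative location, (1, 0).
    print(to_allocentric((3.0, 0.0), (2.0, 0.0), 0.0))
    print(to_allocentric((2.0, 1.0), (2.0, 0.0), math.pi / 2))
```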

In their theory, specific layers in every cortical region receive both a sensory signal and a location signal, that is, a sensory feature and its allocentric location. The input layer therefore knows both what feature it is sensing and where that feature sits on the object being perceived. The output layer learns complete models of objects as sets of features at locations. Fascinatingly, this resembles how computer-aided design (CAD) software represents multi-dimensional objects.
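The "set of features at locations" idea can be sketched with ordinary data structures. In the toy Python example below, each object is stored as a set of (feature, location) pairs, and every new observation made while the sensor moves narrows the list of candidate objects. This is a hypothetical dictionary-based illustration, not the paper's neural implementation; the objects, feature names, and grid locations are invented for the example.

```python
from typing import Dict, FrozenSet, Iterable, Set, Tuple

Location = Tuple[int, int]          # hypothetical discrete allocentric location
Observation = Tuple[str, Location]  # (feature, location on the object)

# Hypothetical toy objects, each stored as a set of features at locations.
OBJECTS: Dict[str, FrozenSet[Observation]] = {
    "cup": frozenset({("rim", (0, 2)), ("handle", (1, 1)), ("flat", (0, 0))}),
    "can": frozenset({("rim", (0, 2)), ("smooth", (1, 1)), ("flat", (0, 0))}),
    "ball": frozenset({("curved", (0, 2)), ("curved", (1, 1)), ("curved", (0, 0))}),
}


def recognize(observations: Iterable[Observation]) -> Set[str]:
    """Keep only objects whose model contains every observed feature-at-location."""
    candidates = set(OBJECTS)
    for obs in observations:
        candidates = {name for name in candidates if obs in OBJECTS[name]}
    return candidates


if __name__ == "__main__":
    touches = [("rim", (0, 2)), ("handle", (1, 1))]
    print(recognize(touches[:1]))  # a rim alone fits both the cup and the can
    print(recognize(touches))      # adding the handle leaves only the cup
```

A single touch is ambiguous here, but a short sequence of features at known locations identifies the object, which mirrors the article's point that the brain learns and recognizes objects through movement.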

The physicists' modeling approach has given researchers new insight into the principles by which the brain may function, insight that is essential for creating general artificial intelligence and sophisticated robotics.

A Theory of How Columns in the Neocortex Enable Learning the Structure of the World. Credit: Numenta

Source: phys.org