Can Google Health's AI interpret X-rays as well as radiologists?

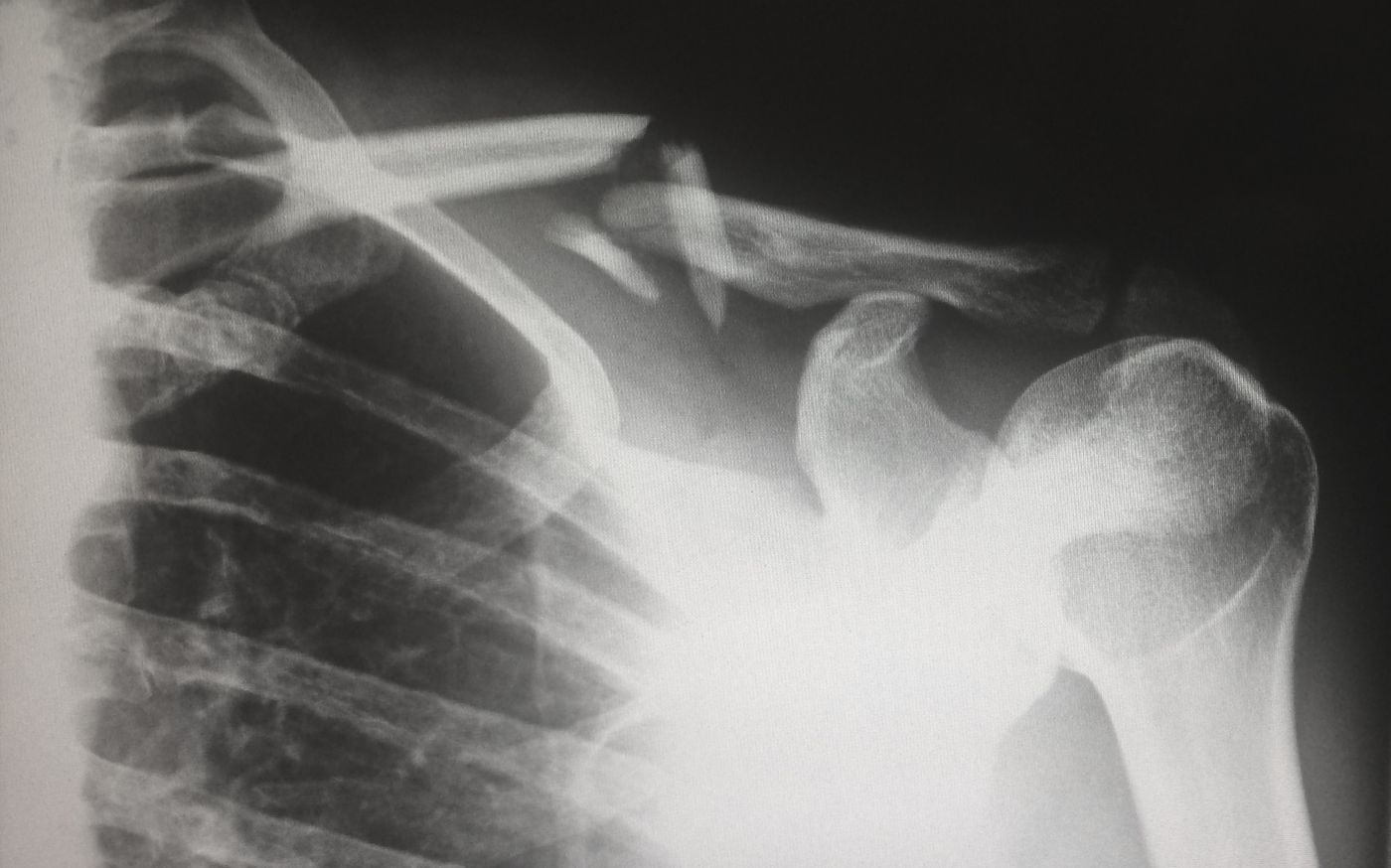

Patients presenting with severe coughs, chest pain or suspected infections are more than likely to be sent for a chest X-ray -- the most commonly taken medical image in hospitals. Radiologists use these images to take a closer look at what’s going on inside the thoracic cavity, which helps them diagnose conditions affecting the heart, lungs, and bones.

Interpreting these chest X-rays, however, isn’t always black and white. It’s a subjective and highly variable process, with conclusions dependent upon the quality of the images and the radiologist's level of expertise.

For a trained medical professional, navigating the anatomy of the chest cavity takes just 5 minutes. Still, correctly predicting conditions from subtle, sometimes masked patterns can be more of an art than a science -- leading to false positives, misdiagnoses or, worryingly, missed diagnoses.

In response, researchers at Google Health have turned to a type of artificial intelligence (AI) known as deep learning in the hopes of making X-ray interpretation both faster and more accurate.

Deep learning mimics how the human brain processes complex information to help guide decision-making when analyzing large datasets. Compared to human cognitive capabilities, deep learning has a tremendous edge -- it can rapidly decipher patterns hidden amidst unstructured and unlabelled raw data. On top of that, as a form of machine learning, the software is able to teach itself and acquire the skills required to complete the task, all without any human involvement.

In a recent study published in the journal Radiology, software engineer Shravya Shetty and colleagues tested AI’s potential as a tool to empower radiologists. To do this, they primed the software with a database of over 800,000 chest X-ray images taken from real patients and healthy participants. A panel of 8 radiologists then added their clinical expertise to the system, improving the software’s capacity to interpret patterns by defining reference standards for common chest abnormalities, such as pneumonia, cancer and broken bones.

When put to the test, the deep learning models performed just as well as human radiologists, correctly detecting patterns that should not appear in a healthy chest cavity: tissue masses, fractures, or the presence of excessive fluid and gas.

This new development gives X-rays, discovered over 120 years ago, a much-needed high-tech boost. Evidence continues to emerge reinforcing AI's potential to take medical diagnostics to the next level, helping physicians make faster, more accurate clinical decisions. Just last month, a similar technology proved useful in helping radiologists detect malignant lung cancers using chest X-rays.

Sources: Medical Xpress, Radiology.