Controlling a Robotic Arm With Brain Activity

When computer scientists and robotics engineers get together to create something, it takes code, hardware and lots of other work to make the project do what it was meant to do.

Robots do not know instinctively how to perform tasks, so they are either programmed with thousands of lines of code that cover very specific actions, or they rely on artificial intelligence (AI) and algorithms that allow the robot to “think,” at least in a very limited scope. It’s not an easy process to get a robot to do anything; even the simplest tasks take a lot of work.

Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) know a thing or two about robotics, and while the work is complex, their goal is to make it a bit simpler. They are working on a system where a user is connected to a robot and can correct any mistakes the robot makes during a task with a simple hand gesture or a brainwave pattern. The team has already had some success in this endeavor, getting robots to execute a “binary choice” task, where there are only two options. The more recent work ups the ante to a multiple-choice task.

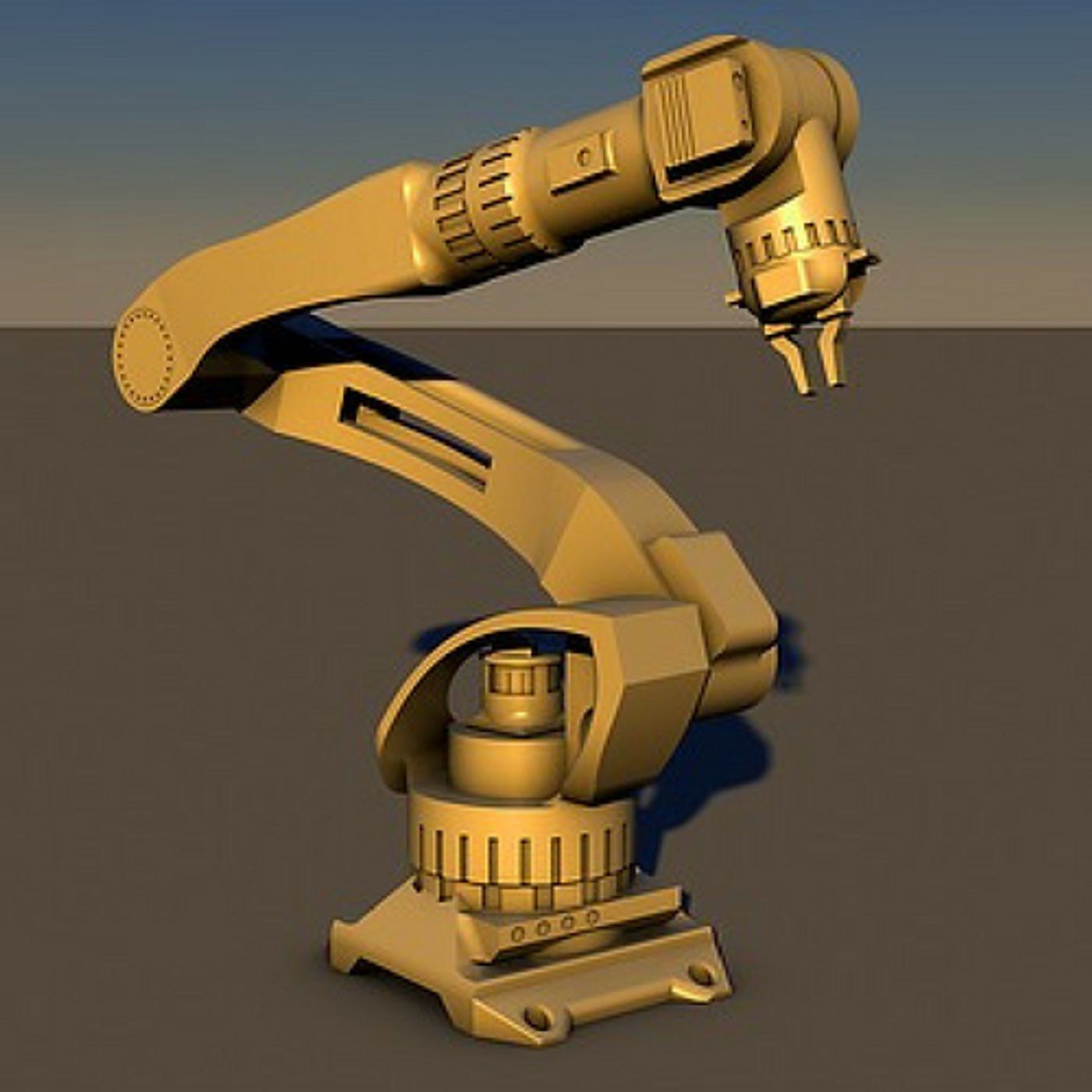

They’ve chosen a robotic arm that must move a power drill to a certain spot on a wooden plank set up in the lab. There are three possible targets on the board, and the robot must figure out which one to drill. A user is connected to the robotic arm, with electrodes reading brain activity and other sensors monitoring muscles in the forearm and hand. The user knows which spot the drill is supposed to hit. If the arm is heading for the wrong target, the user’s brain activity indicates that there is an error, and the arm’s movement is then corrected through a combination of brainwaves and hand muscle movements, both read by the interface.
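The correction loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the CSAIL team's implementation: the function name `supervise_choice`, the boolean `errp_detected` flag, the left-to-right target layout and the "left"/"right" gesture labels are all hypothetical, and the real classification of brain and muscle signals is replaced by plain inputs.

```python
from typing import Optional

# Three possible drill targets on the plank, indexed left to right
# (the layout is an assumption made for this sketch).
NUM_TARGETS = 3


def supervise_choice(robot_choice: int,
                     errp_detected: bool,
                     gesture: Optional[str] = None) -> int:
    """Return the target index the arm should end up at.

    robot_choice  -- index (0..2) the robot picked on its own
    errp_detected -- hypothetical flag: True if the user's brain activity
                     signalled that the robot is making an error
    gesture       -- hypothetical hand-gesture label, "left" or "right"
    """
    if not 0 <= robot_choice < NUM_TARGETS:
        raise ValueError("robot_choice must be 0, 1 or 2")

    # No error signal from the user: keep the robot's own choice.
    if not errp_detected:
        return robot_choice

    # Error signalled: a hand gesture nudges the arm to a neighbouring target,
    # clamped so it never leaves the plank.
    if gesture == "left":
        return max(robot_choice - 1, 0)
    if gesture == "right":
        return min(robot_choice + 1, NUM_TARGETS - 1)

    # Error signalled but no usable gesture: leave the choice unchanged.
    return robot_choice


if __name__ == "__main__":
    print(supervise_choice(1, errp_detected=False))
    print(supervise_choice(1, errp_detected=True, gesture="right"))
```

The point of the sketch is the division of labor: the brain signal only says that something is wrong, while the gesture says where the arm should go instead.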

CSAIL Director Daniela Rus, who oversaw the work at MIT, explained, “This work combining EEG and EMG feedback enables natural human-robot interactions for a broader set of applications than we've been able to do before using only EEG feedback. By including muscle feedback, we can use gestures to command the robot spatially, with much more nuance and specificity.”

The trick was to use brain signals called “error-related potentials” (ErrPs), which show that a user has detected an error. Rather than requiring the user to think about a specific activity for the software to respond, the system relies on ErrPs, which occur automatically. MIT Ph.D. candidate Joseph DelPreto, the lead author of the paper, explained, “What’s great about this approach is that there’s no need to train users to think in a prescribed way. The machine adapts to you, and not the other way around.”

It seems to work well, too. Using a robot called “Baxter,” built by the company Rethink Robotics, the team demonstrated an improvement in how often the robot hit the right target. Accuracy rose from 70% without human supervision to 97% with users connected to the interface. The goal moving forward is to make machines that can adapt to the user’s natural gestures and thoughts. The paper was presented at Robotics: Science and Systems in Pittsburgh. Check out the video to see the team and Baxter in action.
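To put the 70% and 97% figures in perspective, the short calculation below converts them into error rates. It uses only the two percentages quoted in the article; the variable names are illustrative.

```python
from fractions import Fraction

# Accuracies reported in the article, kept as exact fractions.
unsupervised_accuracy = Fraction(70, 100)
supervised_accuracy = Fraction(97, 100)

# Error rate is the share of attempts that miss the right target.
unsupervised_error = 1 - unsupervised_accuracy
supervised_error = 1 - supervised_accuracy

# Gain in percentage points, and the factor by which misses shrink.
gain_points = (supervised_accuracy - unsupervised_accuracy) * 100
error_reduction = unsupervised_error / supervised_error

print(f"Accuracy gain: {gain_points} percentage points")
print(f"Errors reduced by a factor of {error_reduction}")
```

Put differently, misses fall from 30% of attempts to 3%, a tenfold reduction.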

Sources: MIT, Robotics: Science and Systems