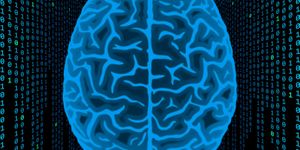

Remember that creepy feeling in 2001: A Space Odyssey when HAL observes Dave beginning a disconnection sequence and asks in a too-calm voice, "What do you think you're doing, Dave?"

The spine-tingling quality behind Stanley Kubrick's omniscient HAL 9000 computer was, of course, its self-directedness that mirrored the human will. It was perfectly symbolized in HAL's unblinking red eye that couldn't be tricked. Fourteen years after the scene in that movie was supposed to have occurred, researchers at Dartmouth have created a process that brings a similar observational quality to smart devices.

Effectively, the new technology allows TVs, smartphones, laptops, tablets, and other devices with a screen or camera to talk to one another using images, without the user being aware that the communication is occurring.

The system works by creating communication channels between smart devices' screens and cameras. The channels run unobtrusively and efficiently in the visible light spectrum.

Typically, if a device encodes data in the image on its display, the viewer can tell because the coded pattern visibly disrupts what is shown. The Dartmouth researchers' system, called HiLight, avoids this disruption: data is transmitted dynamically and read in real time by any camera-equipped device pointed at the screen. It supports any normally viewable format, including video, gaming, web page, and movie content.
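For readers who want a feel for the general idea of hiding data in what a screen shows, here is a toy sketch. It is not HiLight's actual encoding, which this article does not describe. It assumes, purely for illustration, that each bit nudges a whole frame's brightness by a tiny step (`DELTA`), and that the receiver can compare against the unmodified frame, which a real camera could not do. The names `embed_bits` and `recover_bits` are hypothetical.

```python
# Toy illustration only: NOT HiLight's actual encoding.
# Assumption: one bit per frame, carried by a tiny brightness shift.

DELTA = 2  # hypothetical step, small relative to the 0-255 pixel range


def embed_bits(frames, bits, delta=DELTA):
    """Return copies of `frames` with one bit hidden in each.

    Each frame is a flat list of 0-255 grayscale pixel values.
    A 1 bit brightens the frame by `delta`; a 0 bit darkens it.
    """
    encoded = []
    for frame, bit in zip(frames, bits):
        shift = delta if bit else -delta
        # Clamp so shifted pixels stay inside the valid range.
        encoded.append([min(255, max(0, p + shift)) for p in frame])
    return encoded


def recover_bits(received, reference):
    """Recover bits by comparing mean brightness with the unmodified frames.

    Simplification: a real receiver would not have `reference` and would
    have to estimate the baseline from the captured video itself.
    """
    bits = []
    for got, ref in zip(received, reference):
        got_mean = sum(got) / len(got)
        ref_mean = sum(ref) / len(ref)
        bits.append(1 if got_mean > ref_mean else 0)
    return bits
```

Calling `recover_bits(embed_bits(frames, bits), frames)` on mid-range grayscale frames returns the original bits, while no pixel changes by more than `DELTA`. Frames that are already fully black or fully white would defeat this toy scheme, since clamping erases the shift.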

The system offers the exciting (and potentially scary) functionality of context awareness. Such real-time data would give applications the ability to gather and interpret information about, for example, your whereabouts, what direction you're facing and, potentially, what you're looking at.

Smart glasses could be one area where HiLight technology would be particularly exciting. Consider the possibilities for augmented reality programming in security, education, medicine, travel, and communication, to name just a few fields that could benefit. It's not much of a stretch to imagine having the power of real-time computing behind everything one looks at.

Xia Zhou, assistant professor of computer science, co-director of the DartNets (Dartmouth Networking and Ubiquitous Systems) Lab, and one of the study's authors, said: "Our work provides an additional way for devices to communicate with one another without sacrificing their original functionality. It works on off-the-shelf smart devices. Existing screen-camera work either requires showing coded images obtrusively or cannot support arbitrary screen content that can be generated on the fly. Our work advances the state-of-the-art by pushing screen-camera communication to the maximal flexibility."

The findings are to be presented May 20 in Florence, Italy, at MobiSys 2015, a conference for sharing research on the design and implementation of mobile computing and wireless systems, services, and applications.

Follow Will Hector on Twitter: @WriterWithHeart

(Source: Science Daily)