Montreal Forum Tackles Ethics in AI

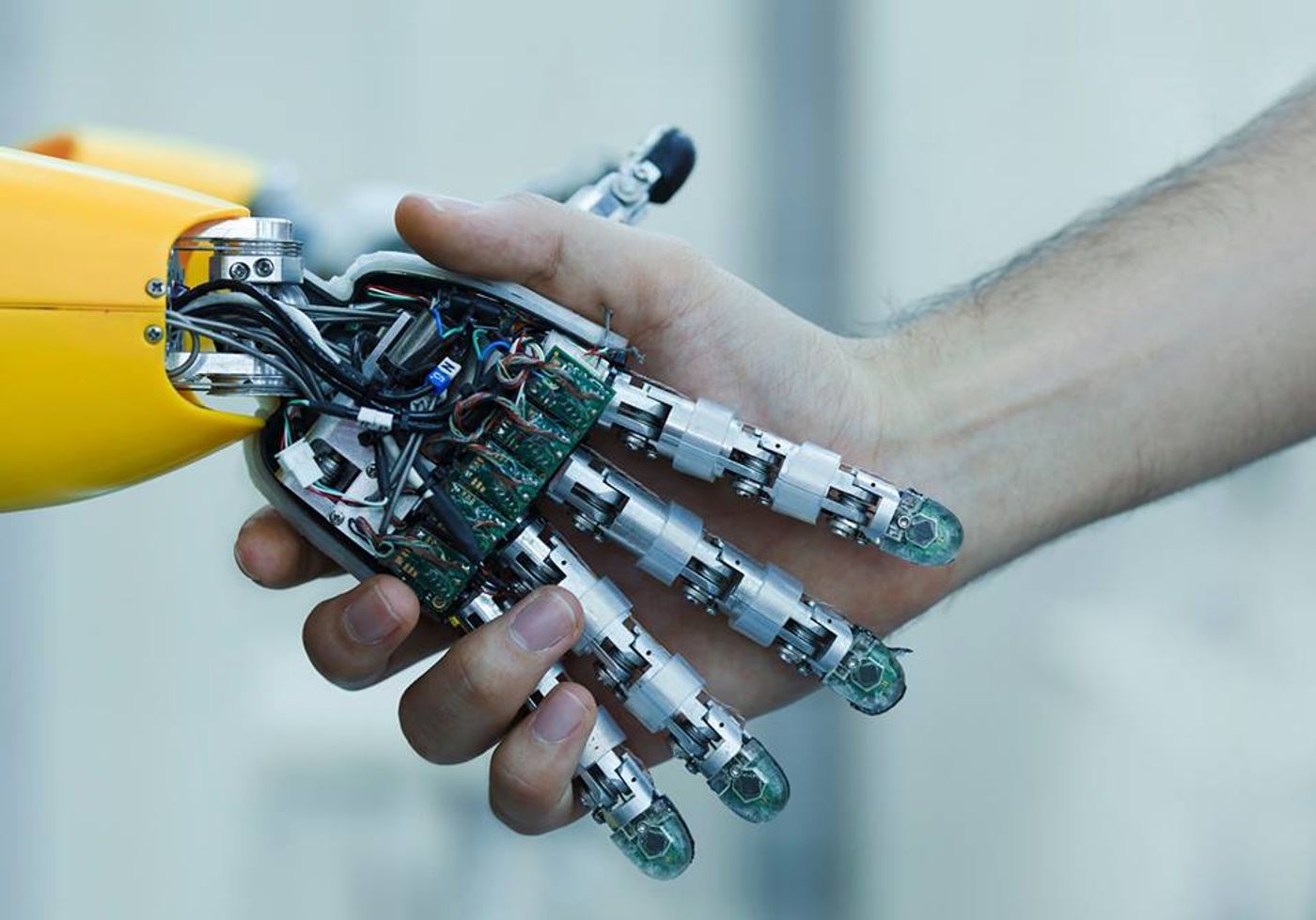

On Nov. 2 and 3, 2017, the Forum on the Socially Responsible Development of Artificial Intelligence (AI) was held in Montreal. Organized by the Université de Montréal, the forum drew about 400 international participants from the fields of AI, academia and government. They tackled questions of cybersecurity, legal liability, moral responsibility and cultural bias in AI (intelligence displayed by machines), as well as its growing effect on the job market. The forum culminated with the release of a draft Montreal Declaration on these topics. Members of the public are now invited to comment on and contribute to the declaration-in-progress.

Decision Making, Bias and Culpability in AI

When a machine makes a faulty categorization or choice, using data it’s been fed by humans or has gathered from its environment through human-taught strategies, who is to blame? This is one of the queries participants in the forum explored.

The biases of the people who create data sets and algorithms to train software can play a pivotal role in the decisions technology makes. CBC gives a few examples in its report on the Montreal Forum. One is that in 2015, Google Photos labeled an image of two Black people as “gorillas.” Afterwards, it was revealed that Google used a collection of 1 million images to train its image recognition tool and that out of the 500 images of people, only two were of Black people.

In another case of societal prejudice showing up in AI, ProPublica discovered racial biases in the risk assessments the criminal justice system in the U.S. uses to influence decisions such as a defendant’s bond or sentencing. The investigation found that Black individuals were being flagged as more likely to repeat a crime at almost twice the rate of white individuals. Also, only 20 percent of the people predicted to commit violent crimes went on to do so. In 2014, then-U.S. Attorney General Eric Holder worried these scores might “exacerbate unwarranted and unjust disparities that are already far too common in our criminal justice system and in our society.”

"Algorithms don't have bias. It's training data sets that have bias," says Abhishek Gupta, an AI ethicist and developer in Montreal.

Exploring how biases infiltrate AI ties into the question of whom to hold responsible for AI’s actions. AI philosopher and New School Assistant Professor Peter Asaro doesn’t think companies creating AI should be self-regulating and believes AI legislation is needed. “But what regulations would be appropriate? In the auto industry, class-action lawsuits brought about safety changes like seatbelts. This is not as obvious in AI,” he tells CBC.

AI’s Effect on Jobs

With many types of work becoming more automated, the influence of AI and specialized machinery on the job market was also under the microscope at the forum.

"Say someone wants to be a radiologist. By the time they are done with studies, they might start to be replaced with automation,” Neurotechnology Entrepreneur Sydney Swaine-Simon told CBC.

He thinks AI needs to be made more accessible to people who are not already versed in the technology so that it can be better integrated into their careers and lives. Being comfortable with AI, he adds, could broaden the public’s understanding of how it will influence the future job market and global economy.

Bernard Marr of Forbes points out that AI may well be used to augment and assist rather than replace the human workforce. For example, he shares a survey the tech consulting firm Capgemini carried out with 1,000 organizations using AI-based systems. The survey revealed four out of five respondents said AI created more jobs. Two-thirds of the participants also said AI had not reduced jobs overall. Capgemini’s Head of Strategic Innovation Tom Ivory says “reskilling” is vital so that employees can be empowered to work alongside and better utilize AI.

The Montreal Declaration for a Responsible Development of Artificial Intelligence seeks to address these difficult issues, and its first draft recommends ethical guidelines for the development of AI. The guidelines focus on these seven values and related proposed principles:

Well-being: Proposed principle: The development of AI should ultimately promote the well-being of all sentient creatures.

Autonomy: Proposed principle: The development of AI should promote the autonomy of all human beings and control, in a responsible way, the autonomy of computer systems.

Justice: Proposed principle: The development of AI should promote justice and seek to eliminate all types of discrimination, notably those linked to gender, age, mental / physical abilities, sexual orientation, ethnic / social origins and religious beliefs.

Privacy: Proposed principle: The development of AI should offer guarantees respecting personal privacy and allowing people who use it to access their personal data as well as the kinds of information that any algorithm might use.

Knowledge: Proposed principle: The development of AI should promote critical thinking and protect us from propaganda and manipulation.

Democracy: Proposed principle: The development of AI should promote informed participation in public life, cooperation and democratic debate.

Responsibility: Proposed principle: The various players in the development of AI should assume their responsibility by working against the risks arising from their technological innovations.

Université de Montréal philosophy professor Marc-Antoine Dilhac hopes the Montreal Declaration “will help guide public decision-makers” and considers it “a wonderfully democratic project.” Further discussion will be initiated and invigorated by interactive gatherings in Canada, including panels, workshops and citizens’ meetings. The Declaration will remain open to change and the public can view or contribute input online.