Playing Blind: A Video Game Played via Direct Brain Stimulation

Plugging inputs into the brain to control a person or give them superpowers is still very much in the realm of science fiction. However, direct brain stimulation is a real area of cutting-edge neuroscience research, and a team at the University of Washington may have found the first small building blocks of a connection between humans and machines. In other words, it’s not just for the movies anymore, and Keanu Reeves might have company in his virtual environment.

The team at the UW Center for Sensorimotor Neural Engineering was the first research group to demonstrate that humans could navigate a virtual environment and play a simple game, all with no visual cues, just direct brain stimulation. Their research paper was published last month in the journal Frontiers in Robotics and AI and it’s getting a lot of attention.

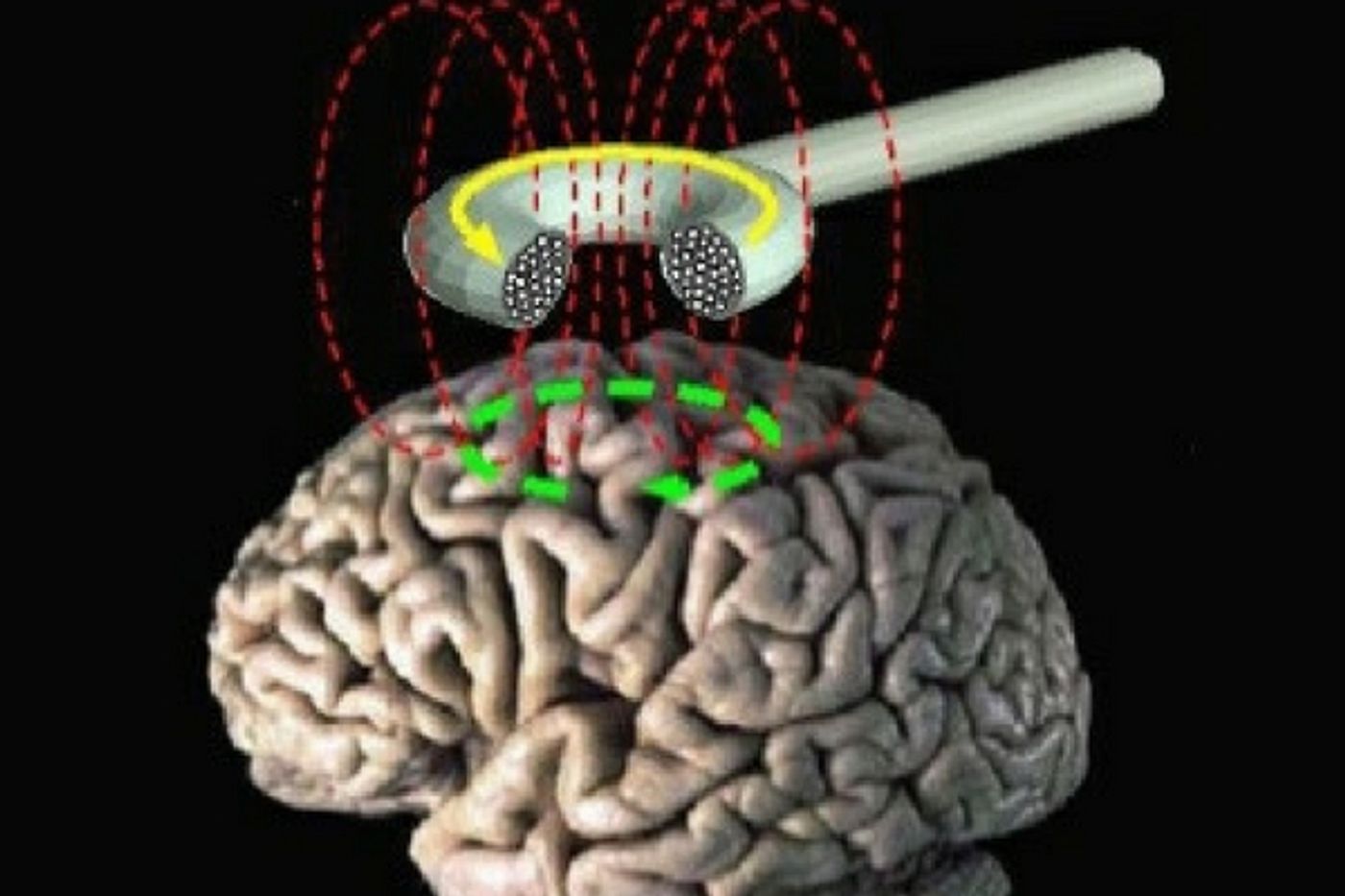

Test subjects in the study were tasked with navigating 21 different computer mazes. The mazes were relatively simple, requiring only that the player move forward or back. The cue for each move was not visual; instead, the research team delivered it directly to the brain. The stimulus was an artifact called a phosphene. It’s created via transcranial magnetic stimulation, in which a magnetic coil placed near the skull directly stimulates certain areas of the brain. Subjects perceive phosphenes as blobs or bars of light, and in this game they were the only clues players had about which way to go.
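For readers who think in code, the binary cue described above can be sketched in a few lines of Python. This is only an illustration, not the team’s software: the names `Move`, `choose_move` and `fraction_correct` are made up here, and the mapping of “phosphene seen” to “back” is an assumption, since the article does not say which cue meant which move.

```python
from enum import Enum


class Move(Enum):
    FORWARD = "forward"
    BACK = "back"


def choose_move(phosphene_seen: bool) -> Move:
    """Turn a one-bit cue into a move.

    ASSUMPTION (not from the study): a perceived phosphene means "go back"
    and no phosphene means "go forward". The real mapping may differ.
    """
    return Move.BACK if phosphene_seen else Move.FORWARD


def fraction_correct(cues: list[bool], correct_moves: list[Move]) -> float:
    """Share of steps where the cue-driven move matches the correct move."""
    if len(cues) != len(correct_moves) or not cues:
        raise ValueError("cues and correct_moves must be non-empty and equal in length")
    hits = sum(choose_move(cue) == move for cue, move in zip(cues, correct_moves))
    return hits / len(cues)
```

The point of the sketch is how little information each step carries: one yes-or-no signal per move, which is the “very simple binary information” the researchers describe.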

Senior author Rajesh Rao, UW professor of Computer Science & Engineering and director of the Center for Sensorimotor Neural Engineering, stated in a press release, “The way virtual reality is done these days is through displays, headsets and goggles, but ultimately your brain is what creates your reality. The fundamental question we wanted to answer was: Can the brain make use of artificial information that it’s never seen before that is delivered directly to the brain to navigate a virtual world or do useful tasks without other sensory input? And the answer is yes.”

The study was small, with only five test subjects, but when they received guidance from the magnetic coil brain stimulation, they made the correct moves 92% of the time, compared to only 15% of the time when they had no input. The game, and the subjects’ near-perfect performance in it, is being seen as a step towards artificial intelligence environments and cranial stimulation being used to convey information that humans can perceive and act on. The test subjects also got better at the navigation task over time, suggesting that they were learning to better detect the artificial stimuli.

Lead author Darby Losey, a 2016 UW graduate in computer science and neurobiology who now works as a staff researcher for the Institute for Learning & Brain Sciences (I-LABS), explained, “We’re essentially trying to give humans a sixth sense. So much effort in this field of neural engineering has focused on decoding information from the brain. We’re interested in how you can encode information into the brain.” The team feels certain that the technology can be scaled up to handle more complex environments and stimuli, beyond the very simple binary information used in this experiment. Possible applications include helping deaf or visually impaired people navigate the world more safely.

Researchers on this project have partnered with others not on the UW campus to form a start-up company called Neubay to use direct brain stimulation and artificial intelligence to make gaming applications, assistive devices and other gear. It’s early days yet, but with the results from this one experiment the team is confident there is nowhere to go but up. Check out the video to see more about how it works.

Sources: UW Today, New Atlas, Frontiers in Robotics and AI