Cracking the Facial Recognition Code

Every day as we navigate around our homes, neighborhoods and workplaces, we see people we know. A friendly wave to a coworker or a quick chat with a shopkeeper and we are connected to our environment. How do we keep everyone straight, though? Recognizing faces isn’t the same as learning where things are and how to get to places we visit often. The brain’s way of recognizing who is who is both simple and incredibly detailed. New research from Caltech has revealed the mechanism the brain uses to accomplish this particular task. With this information, the hope is that someday, by monitoring a person’s brain activity, it might even be possible to reconstruct what that person is seeing as they look at their surroundings.

Doris Tsao, a Caltech professor of biology, the leadership chair and director of the Tianqiao and Chrissy Chen Center for Systems Neuroscience, and a Howard Hughes Medical Institute investigator, led the research, which was published in the journal Cell. The main takeaway from the research was that while there are millions of combinations of noses, eyes, ears, chins, cheeks and lips that make up a face, the brain uses about 200 specific neurons to encode any face, with each neuron looking at a certain axis or dimension of a face. The researchers likened it to the way the basic colors of light (red, green and blue) can be combined to create any one of thousands of custom colors. They dubbed this spectrum of faces “the face space.”

These 200 neurons divide up the work of encoding facial features like a well-organized assembly line. Whether it’s the shape of the face, the size of the eyes, the distance from the nose to the lips or any of several other muscular and skeletal facial features, each neuron responds in a specific way to contribute data to the whole. Stronger neural responses happen with larger features and weaker responses with smaller features, but it’s not as if each nerve cell is naming the features as in “nose,” “eyes” or “lips.” Each cell is more like a gauge of how far a face lies along one general direction in the face space. Taking all of those readings together, along with the directions the neurons measure, is what allows the brain to recognize an individual face and match it to a person.
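To make the idea of a neuron responding along a direction a little more concrete, here is a minimal sketch in Python. It is a toy illustration, not the researchers' model: the three-dimensional face space, the numbers and the function name `neuron_response` are all assumptions made up for this example. The only idea borrowed from the article is that a cell's response grows with how far the face lies along that cell's axis.

```python
def neuron_response(face, axis, baseline=0.0):
    """Toy model (an assumption): the response rises linearly with the
    face's projection onto the neuron's preferred axis."""
    return baseline + sum(f * a for f, a in zip(face, axis))

# A hypothetical neuron whose axis mixes the first two dimensions equally
# and ignores the third. The dimensions are not named features.
axis = [0.5, 0.5, 0.0]

# Two made-up faces described as points in a 3-dimensional face space.
face_weak = [1.0, 1.0, 3.0]
face_strong = [3.0, 1.0, -2.0]

weak = neuron_response(face_weak, axis)
strong = neuron_response(face_strong, axis)

# The face lying further along the axis drives the neuron harder.
print(weak, strong)
```

Notice that the third number in each face has no effect on this particular cell. A different neuron with a different axis would pick up that information instead.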

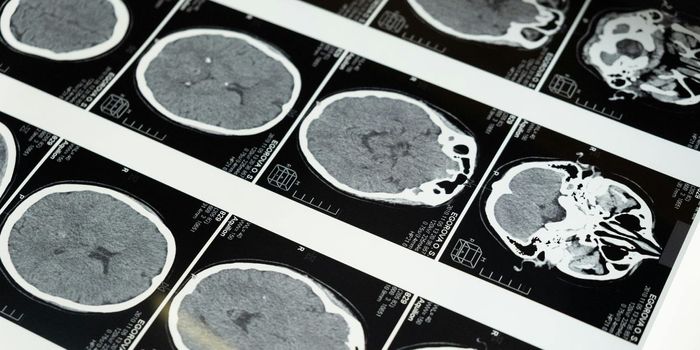

Building on her 2003 research, which involved areas of brain activity in monkeys as they viewed faces, Tsao wanted to refine what the cells in the brain were actually revealing when they would activate after seeing particular features like eyes, noses and hair. In a press release, Tsao explained, “This new study represents the culmination of almost two decades of research trying to crack the code of facial identity. It’s very exciting because our results show that this code is actually very simple.” Comparing the earlier work with the most recent study, she said, “These results were unsatisfying, as we were observing only a shadow of what each cell was truly encoding about faces. For example, we would change the shape of the eyes in a cartoon face and find that some cells would be sensitive to this change. But cells could be sensitive to many other changes that we hadn’t tested. Now, by characterizing the full selectivity of cells to faces drawn from a realistic face space, we have discovered the full code for realistic facial identity.”

The results were tested using ideas from linear algebra, with each face treated as a multidimensional vector that is projected onto a one-dimensional subspace, the axis a given neuron measures. By calculating each cell’s null space, the set of changes to a face that leave that cell’s response unchanged, the team was able to develop algorithms that, when combined with monitoring the brain activity of lab macaque monkeys, could recreate the face the monkey was seeing with a high degree of accuracy.
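For readers who like to see the linear algebra in action, the sketch below shows the general idea with NumPy. It is a toy under stated assumptions, not the algorithm from the Cell paper: the five-dimensional face space, the 20 simulated neurons, the random axes and the helper names `encode` and `decode` are all invented for illustration, and ordinary least squares stands in for whatever decoding method the team actually used.

```python
import numpy as np

rng = np.random.default_rng(0)

n_dims = 5       # toy face-space dimensionality (assumption)
n_neurons = 20   # toy population size (assumption)

# Each row is one hypothetical neuron's preferred axis in face space.
axes = rng.normal(size=(n_neurons, n_dims))

def encode(face):
    """Each neuron reports the face's projection onto its own axis."""
    return axes @ face

def decode(responses):
    """Recover the face vector from the population by least squares."""
    face, *_ = np.linalg.lstsq(axes, responses, rcond=None)
    return face

face = rng.normal(size=n_dims)
reconstructed = decode(encode(face))

# Null space of a single neuron: a change to the face that is orthogonal
# to the neuron's axis leaves that neuron's response unchanged.
axis0 = axes[0]
change = rng.normal(size=n_dims)
change -= (change @ axis0) / (axis0 @ axis0) * axis0  # drop the part along axis0
same_response = bool(np.isclose(axis0 @ face, axis0 @ (face + change)))

print(np.allclose(reconstructed, face), same_response)
```

One neuron alone cannot tell apart faces that differ only within its null space, but a population of neurons with different axes covers each other's blind spots. That is why the combined responses are enough to rebuild the face in this toy setup.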


Sources: Caltech, NY Times, Cell