Brain-Inspired Computing

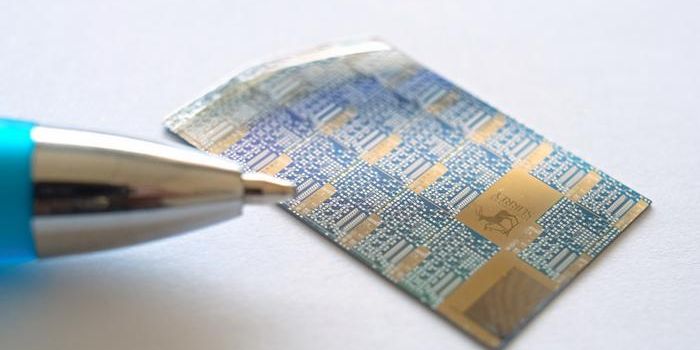

The transistor, which lets a weak signal control a much larger current, was invented in 1947. Since then, computing has advanced steadily as the number of transistors that fit on a chip has doubled roughly every two years, a trend known as Moore's Law. As a result, much of present-day computing research has focused on operational functions. One such study examined the computing landscape through the lens of operational functions, with the aim of advancing brain-inspired neuromorphic computing.


"The future of computing will not be about cramming more components on a chip but in rethinking processor architecture from the ground up to emulate how a brain efficiently processes information," says study author Suhas Kumar of Hewlett Packard Labs.

The study, published in Applied Physics Reviews, discusses hybrid architectures that combine digital and analog components, made possible by devices known as memristors: essentially resistors with memory that can process information directly where it is stored.
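To make "processing information where it is stored" more concrete, here is a minimal sketch, assuming an idealized memristor crossbar: a grid in which each memristor joins one row wire to one column wire. This layout is a common illustration of memristor computing and is an assumption here, not a detail the study is quoted as describing. The function name `crossbar_currents` and the example values are hypothetical. Each device's stored conductance acts as a weight; applying voltages to the rows produces column currents equal to a matrix-vector product, by Ohm's law (I = G·V) and Kirchhoff's current law (currents meeting on a wire add up).

```python
from typing import List


# Hypothetical, idealized model: each memristor is treated as a fixed
# conductance (in siemens) that "remembers" its programmed value.
# Real devices are nonlinear, noisy and drift; none of that is modeled here.
def crossbar_currents(conductances: List[List[float]], voltages: List[float]) -> List[float]:
    """Return the current leaving each column of an ideal crossbar.

    conductances[i][j] is the memristor joining row i to column j.
    voltages[i] is the voltage applied to row i.
    Ohm's law gives each device a current G * V; Kirchhoff's current law
    sums those currents on each column, so column j carries
    sum_i G[i][j] * V[i], a matrix-vector product computed in place.
    """
    n_rows = len(conductances)
    if n_rows != len(voltages):
        raise ValueError("need exactly one voltage per row")
    n_cols = len(conductances[0]) if n_rows else 0
    currents = [0.0] * n_cols
    for i in range(n_rows):
        for j in range(n_cols):
            # Current through the device at (i, j), added to column j's total
            currents[j] += conductances[i][j] * voltages[i]
    return currents


if __name__ == "__main__":
    # 2 rows x 3 columns of programmed conductances (the stored "weights")
    G = [[1e-3, 2e-3, 0.0],
         [0.5e-3, 0.0, 1e-3]]
    V = [0.2, 0.4]  # input voltages applied to the rows
    print(crossbar_currents(G, V))
```

In real hardware the summation happens physically as currents merge on each column wire, so the weights never have to be shuttled between a separate memory and processor; the Python loop only simulates that physics.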

"Solutions have started to emerge which replicate the natural processing system of a brain, but both the research and market spaces are wide open," added co-author Jack Kendall of Rain Neuromorphics.

The research suggests that computers need to be reinvented because current designs cannot scale efficiently. By mimicking the neural architecture of the human brain, computers could perform highly complex tasks. The authors predict that neuromorphic computing could arrive as early as this decade.

"Today's state-of-the-art computers process roughly as many instructions per second as an insect brain," note the authors.

Sources: San Jose State University, Applied Physics Reviews, Science Daily