Humans and Computers Talk Silently With New MIT Headset

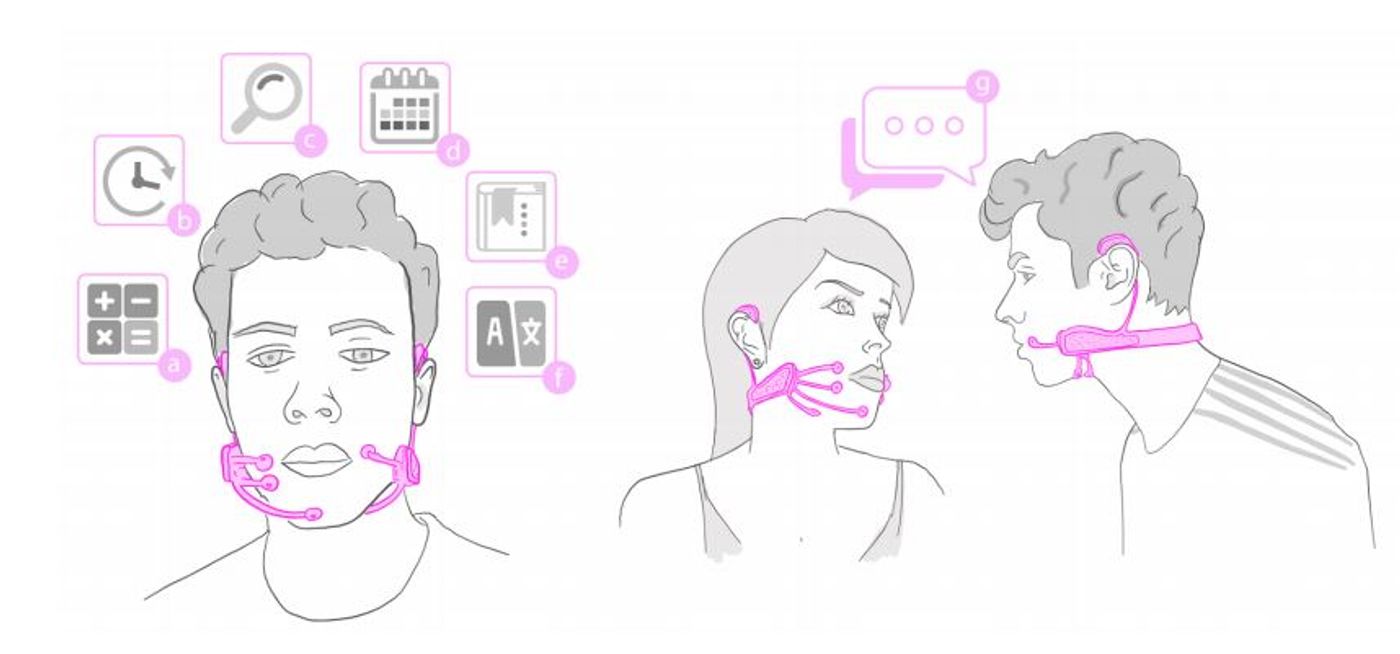

Internal verbalization – silently speaking in one’s head but not aloud – is the basis for MIT’s new intelligence augmentation (IA) device called the AlterEgo. This “silent speech interface” allows the user to communicate with a computer or other person in complete silence by talking internally and wearing a headset. While a person speaks in their mind, AlterEgo uses electrodes to pick up on neuromuscular signals originating in the face and jaw, which are undetectable to the human eye, and correlates them with words. Its possible applications range from daily interaction with online media while multitasking, to improving communication abilities for the speech-impaired, to allowing people in loud, dangerous or covert situations to have completely silent and private conversations. MIT researchers envision it as the basis for an integrated human-computer “second self.”

“The motivation for this was to build an IA device … Our idea was: Could we have a computing platform that’s more internal that melds human and machine in some ways and that feels like an internal extension of our own cognition?” MIT Media Lab grad student and lead author of the corresponding paper, Arnav Kapur, said.

The system also uses bone conduction headphones to send information back to the user, enabling a two-way silent conversation. These headphones deliver vibrations to the inner ear through the bones of the face without blocking the ear canal, and users can still have separate verbal and auditory experiences while wearing them.

Early studies show the device has a transcription accuracy rating of about 92 percent, and Kapur thinks it can go much higher with more training data.

“I think we’ll achieve full conversation someday,” said Kapur. The current least-obtrusive model of the headset has curved appendages that bring four electrodes into contact with one jaw.

“AlterEgo aims to combine humans and computers – such that computing, the internet and AI would weave into human personality as a ‘second self’ and augment human cognition and abilities,” an MIT video on the tech states.

AlterEgo uses a neural network made up of layers of processing nodes that work together on classification tasks, in this case correlating the neuromuscular signals picked up by the electrodes with words.
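For readers curious what a layered classifier looks like in practice, here is a minimal sketch in Python. It is not AlterEgo's actual network: the vocabulary, the layer sizes, the random untrained weights and the idea of one input value per electrode are all assumptions made for illustration. It only shows the general pattern of passing a signal through layers of nodes and picking the most likely word.

```python
import math
import random

# Hypothetical vocabulary; placeholder words, not the study's actual word list.
VOCAB = ["add", "subtract", "pawn", "knight"]

def make_layer(n_in, n_out, rng):
    """Build one fully connected layer with random weights and zero biases."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def dense(inputs, layer):
    """Each node computes a weighted sum of its inputs plus a bias."""
    weights, biases = layer
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Keep positive activations, zero out negative ones."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def classify(features, layers):
    """Pass the features through every layer and return the top-scoring word."""
    activations = features
    for layer in layers[:-1]:
        activations = relu(dense(activations, layer))
    probs = softmax(dense(activations, layers[-1]))
    best = max(range(len(probs)), key=probs.__getitem__)
    return VOCAB[best], probs

rng = random.Random(0)
# Assumed shape: 4 inputs (one per electrode), 8 hidden nodes, one output per word.
layers = [make_layer(4, 8, rng), make_layer(8, len(VOCAB), rng)]
features = [0.2, -0.1, 0.7, 0.05]  # made-up signal readings
word, probs = classify(features, layers)
print(word, [round(p, 3) for p in probs])
```

Because the weights here are random, the word it prints is arbitrary. A real system would learn its weights from recorded training data, which is why more data is expected to improve accuracy.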

In this study, 10 people spent 15 minutes with the device for customization and then 90 minutes for execution trials. The tasks involved about 20 words and centered on arithmetic and chess. In the chess experiments, subjects were able to noiselessly describe their opponent’s moves and then get silent advice from the computer on how to respond.

Thad Starner, a professor at Georgia Tech’s College of Computing, told MIT News he sees a diverse group of uses for this tech, including in loud environments like airport runways and in factories. He also points out that people with voice disabilities, as well as those working in special ops, might benefit from this device in the future.

MIT Professor of media arts and sciences Pattie Maes, who is Kapur’s thesis adviser, thinks it will be helpful in daily life performing the types of functions for which we now turn to cellphones or other devices. For example, she imagines people using it to look something up online while remaining engaged in a conversation.

An MIT video demonstrates AlterEgo's multifunctionality in everyday scenarios: silently asking the time, adding up purchases, or serving as a quiet, hands-free controller for streaming TV selections.

Source: MIT News