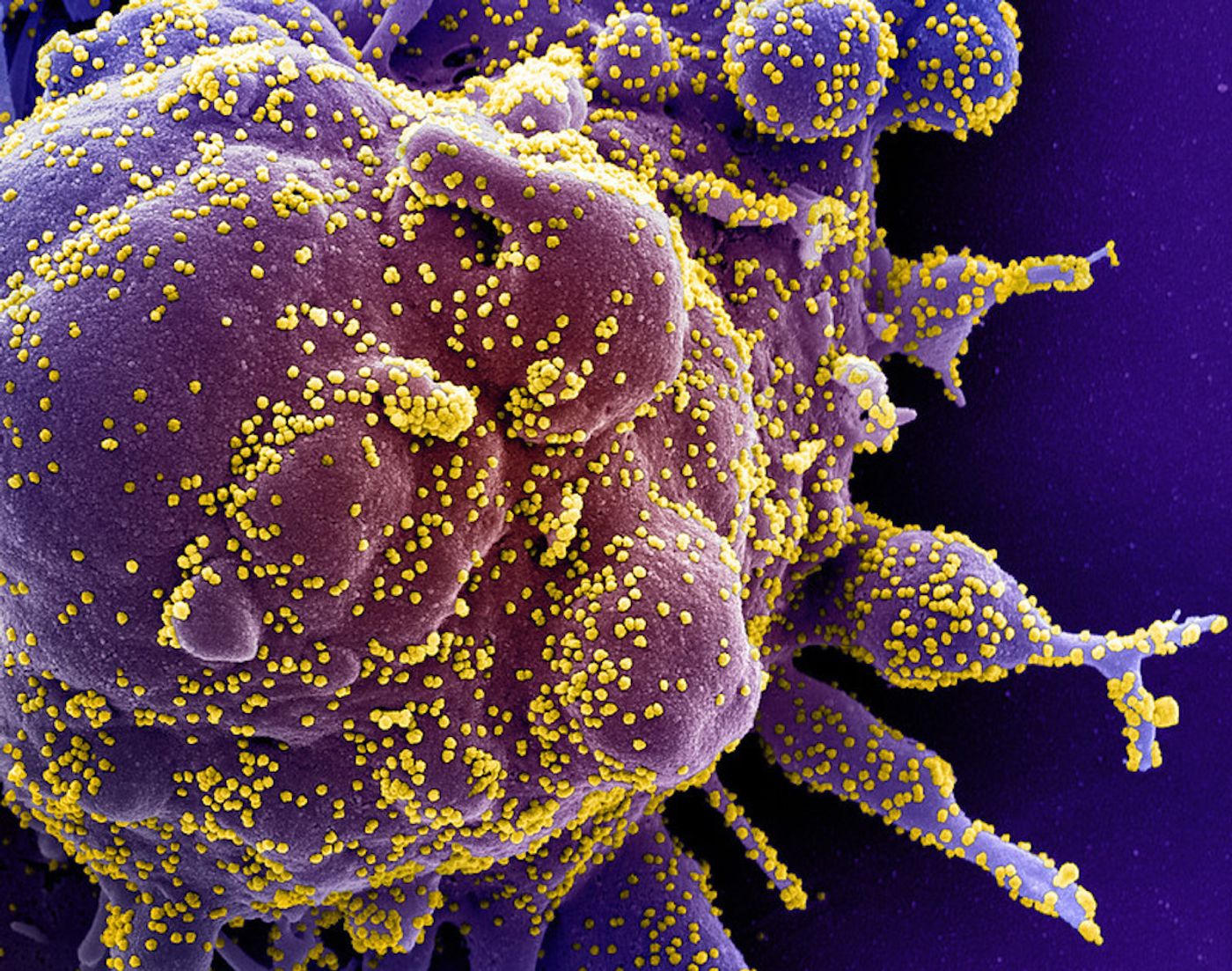

Concerns Remain About the Accuracy of COVID-19 Tests

Diagnostic tests have been getting a lot of attention recently because of the COVID-19 pandemic. In the United States, the response to the virus is thought to have suffered major setbacks in part because diagnostic testing was not widely available and the tests that were performed did not always give the correct result. The Food and Drug Administration (FDA) allowed about 70 tests to be rushed to market without carefully vetting their accuracy.

If widespread, reliable testing had been set up in time, it might have been possible to identify infected people in the country and trace those they had come into contact with before the outbreak became so widespread. Now that the infection has stricken so many communities, it is important not only to identify those who have an active infection but also to know who has already been infected and may now be immune to the virus. These test results will, of course, need to be reliable.

NBC News has reported that tests have been missing up to twenty percent of positive COVID-19 cases. Problems with sample collection may be to blame; if the virus has already migrated from the nose to the lungs, a nasopharyngeal swab would miss it. The FDA has removed many tests from the market now that it has had a chance to examine some of them more closely, and it is warning about the accuracy of the Abbott ID NOW rapid test.

New research reported in the New England Journal of Medicine has examined how accurate diagnostic tests are. There are two major ways to judge a test. A test's sensitivity is the likelihood that an infected person will get a positive result. If the sensitivity is low, too many people who are actually infected will test negative, i.e., there will be lots of false negatives. A test's specificity is the likelihood that a person who is not infected will get a negative result. If the specificity is low, there will be false positives: an uninfected person could get a positive result. An interactive graph published with the report shows how variations in these characteristics impact the reliability of results.
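As a rough illustration of these two measures, the sketch below computes them from the counts in a validation sample, using the standard definitions: sensitivity is the share of infected people who test positive, and specificity is the share of uninfected people who test negative. The function names and the counts are hypothetical and chosen only for illustration; they are not taken from the report.

```python
def sensitivity(true_positives: int, false_negatives: int) -> float:
    """Share of infected people whom the test correctly reports as positive."""
    infected = true_positives + false_negatives
    if infected == 0:
        raise ValueError("the sample contains no infected people")
    return true_positives / infected


def specificity(true_negatives: int, false_positives: int) -> float:
    """Share of uninfected people whom the test correctly reports as negative."""
    uninfected = true_negatives + false_positives
    if uninfected == 0:
        raise ValueError("the sample contains no uninfected people")
    return true_negatives / uninfected


# Hypothetical validation sample: 100 infected and 100 uninfected people.
print(sensitivity(true_positives=80, false_negatives=20))  # 0.8
print(specificity(true_negatives=95, false_positives=5))   # 0.95
```

In this made-up sample, the test would miss 20 of every 100 infected people (false negatives) and wrongly flag 5 of every 100 uninfected people (false positives).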

"Diagnostic tests, typically involving a nasopharyngeal swab, can be inaccurate in two ways," explained lead study author Steven Woloshin, M.D., M.S., a professor of medicine and community and family medicine at Dartmouth's Geisel School of Medicine, and of The Dartmouth Institute for Health Policy and Clinical Practice. "A false-positive result mistakenly labels a person infected, with consequences including unnecessary quarantine and contact tracing. False-negative results are far more consequential because infected persons who might be asymptomatic may not be isolated and can infect others."

The report noted that current COVID-19 diagnostic tests vary in sensitivity and that there is no standard way to validate them, which is a problem. Several large studies have already shown that tests are producing many false negatives.

"Diagnostic testing will help to safely open the country, but only if the tests are highly sensitive and validated against a clinically meaningful reference standard--otherwise we cannot confidently declare people uninfected," added Woloshin.

The study authors noted that the FDA should require manufacturers to disclose the sensitivity and specificity of their tests when those tests are put on the market.

"Measuring the sensitivity of tests in asymptomatic people is an urgent priority," said Woloshin. "A negative result on even a highly sensitive test cannot rule out infection if the pretest probability--an estimate before testing of a person's chance of being infected--is high, so clinicians shouldn't trust unexpected negative results."

Such a probability may depend on the infection rate of COVID-19 where a person lives, their symptoms, and their history of exposure, he added.
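Woloshin's point about pretest probability can be made concrete with Bayes' theorem. The sketch below is a minimal illustration, not the authors' calculator: the function name is hypothetical, and the sensitivity, specificity, and pretest values are assumed numbers picked only to show the effect.

```python
def prob_infected_given_negative(pretest: float, sens: float, spec: float) -> float:
    """Chance a person is infected despite a negative result (Bayes' theorem)."""
    for name, value in (("pretest", pretest), ("sens", sens), ("spec", spec)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    false_negative = pretest * (1.0 - sens)   # infected, but the test says negative
    true_negative = (1.0 - pretest) * spec    # uninfected, and the test says negative
    if false_negative + true_negative == 0.0:
        raise ValueError("a negative result is impossible with these inputs")
    return false_negative / (false_negative + true_negative)


# Assumed test characteristics (illustrative only): 90% sensitive, 95% specific.
for pretest in (0.05, 0.50):
    p = prob_infected_given_negative(pretest, sens=0.90, spec=0.95)
    print(f"pretest {pretest:.0%}: {p:.1%} chance of infection after a negative test")
```

With these assumed numbers, a negative result leaves well under a 1 percent chance of infection when the pretest probability is 5 percent, but close to a 10 percent chance when the pretest probability is 50 percent, which is why an unexpected negative result deserves caution.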

Sources: AAAS/Eurekalert! via The Geisel School of Medicine at Dartmouth, New England Journal of Medicine