The idea of being able to “read” the thoughts in someone’s brain has been the subject of science fiction stories many times. Some scanning techniques can show which areas of the brain are activated when a person reads, listens to music or performs a task requiring concentration, but so far there is no way to read the brain as if a little speech bubble appeared over it. However, a new study conducted at the University of California, Berkeley, may bring scientists closer to decoding thoughts and language.

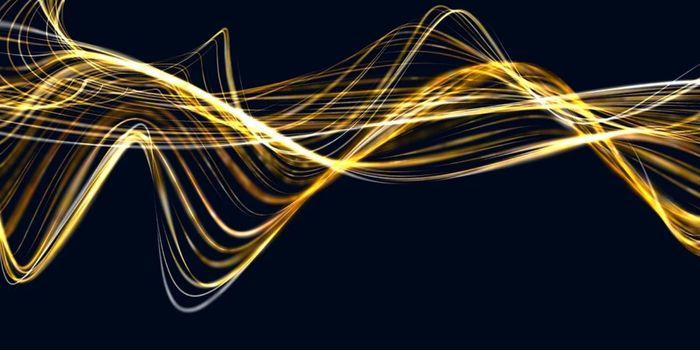

Calling it a “semantic atlas,” the team has completed a brain imaging study that shows the brain lit up in bright colors according to how it processes the meaning of language. Participants spent hours in functional MRI (fMRI) scanners, which recorded neural activity while they listened to stories from “The Moth Radio Hour.” By mapping the areas that responded to the stories, the team showed that about one-third of the brain’s cerebral cortex is involved in decoding the language and words we hear.

The team noted that different test subjects had similar semantic maps, which suggests the technology could eventually be adapted to help certain groups of patients, such as people with ALS or other disorders that affect speech and language. Alex Huth, a postdoctoral researcher at UC Berkeley and the study’s lead author, said in a press release: "The similarity in semantic topography across different subjects is really surprising. This discovery paves the way for brain-machine interfaces that can interpret the meaning of what people want to express. Imagine a brain-machine interface that doesn't just figure out what sounds you want to make, but what you want to say. For example, clinicians could track the brain activity of patients who have difficulty communicating and then match that data to semantic language maps to determine what their patients are trying to express. Another potential application is a decoder that translates what you say into another language as you speak."

The fMRI scans recorded blood flow to different areas of the brain as participants listened to people telling stories about their own life experiences, some funny and some more serious. The participants' imaging data were then matched against time-coded phonemic transcriptions of the stories, which mark when each phoneme (a unit of sound that distinguishes one word from another) was spoken. That information was then fed into an algorithm that scored each word by how closely it is related in meaning to other words.
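The idea of scoring words by how similar they are can be illustrated with a toy sketch. This is not the study's actual pipeline; it swaps in a simple, widely used technique under stated assumptions: each word is represented by counts of the words that appear near it in a text (a co-occurrence vector), and two words are scored by the cosine similarity of those vectors. The function names `cooccurrence_vectors` and `cosine_similarity`, the two-word window and the tiny corpus are all hypothetical choices made for illustration.

```python
import math
from collections import Counter, defaultdict


def cooccurrence_vectors(tokens, window=2):
    """Hypothetical helper: for each word, count how often every other
    token appears within `window` positions of it."""
    vectors = defaultdict(Counter)
    for i, word in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                vectors[word][tokens[j]] += 1
    return vectors


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors.
    1.0 means the words occur in identical contexts; 0.0 means no shared context."""
    dot = sum(count * b[key] for key, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy corpus (assumption): words used in similar contexts should score higher.
corpus = "the dog chased the cat the cat chased the dog she thought about an idea he thought about a plan"
vecs = cooccurrence_vectors(corpus.split())
print("dog vs cat: ", round(cosine_similarity(vecs["dog"], vecs["cat"]), 3))
print("dog vs idea:", round(cosine_similarity(vecs["dog"], vecs["idea"]), 3))
```

In this sketch "dog" scores as closer to "cat" than to "idea" because the first two share neighbors such as "the" and "chased." Real systems use far larger corpora and more dimensions, but the principle of judging meaning by shared context is the same.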

The results were then used to build a thesaurus-like map of the brain, with words sorted into categories such as locational, mental, abstract and violent, and each category plotted onto the areas of the brain that process those words.

Although the maps were notably similar across participants, the team stressed that more research is needed to understand how individuals differ in the way they process language, and that this will require a larger and more diverse group of study participants. The following video talks about the study and how the National Science Foundation hopes the information can help people struggling with language-based difficulties.

Sources

National Science Foundation

Nature

UC Berkeley